Key Points

- Artificial intelligence (AI) development occurs primarily in large, centralized data centers.
- Nvidia is a leading supplier of graphics processing units (GPUs), essential for AI workloads.
- Micron Technology supplies high-bandwidth memory (HBM) for data centers, critical for GPU performance.

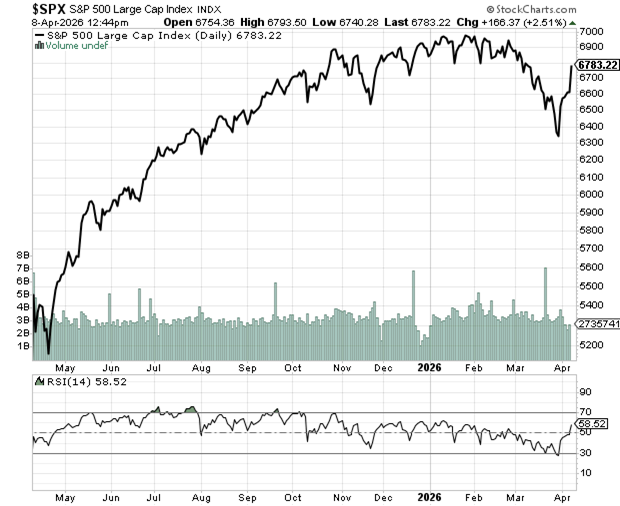

Nvidia generated a record $215.9 billion in revenue in fiscal year 2026, and its stock has risen significantly on the AI boom. The company is set to release its Vera Rubin semiconductor platform, which could reduce the number of GPUs required to train AI models by 75% and cut inference token costs by 90%. Gains of that size would reinforce Nvidia’s central role in the AI industry.

Meanwhile, Micron reported total revenue of $23.9 billion for its fiscal 2026 second quarter, a 196% year-over-year increase. The company’s new HBM4 solution provides 60% more capacity and 20% better energy efficiency, which positions Micron well for AI applications beyond data centers as demand for AI-enabled consumer devices grows.